CS348B Final Project

Group: Matthew Everett and Jeffrey Mancuso

"Reptiles"

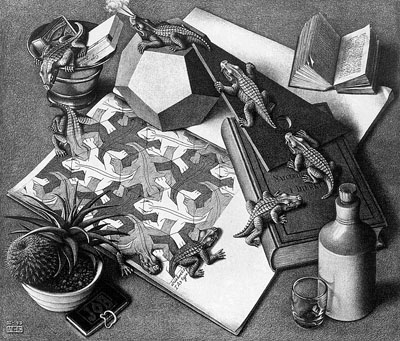

M.C. Escher's pencil drawing of Reptiles

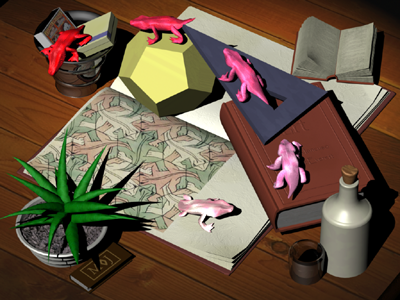

Our rendering of Reptiles

For our final project we chose to render Escher's Reptiles drawing. The primary goal of this project was to create the most realistic image possible while remaining true to the original work.

Below are the final images, rendered at 100 samples per pixel. The black-and-white image above was derived from the final rendering on the left: it was converted to grayscale, gamma corrected, and given a slightly larger aspect ratio.

Original

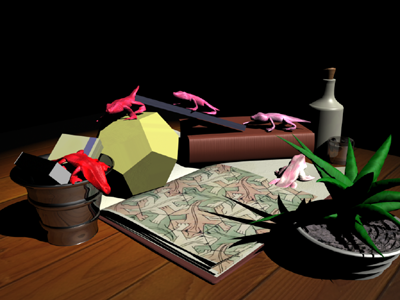

perspective

Side view

In order to create a realistic image, as well as make it as close to Escher's drawing as possible, we implemented a number of enhancements to LRT.

Tilt-Shift Lens

Vanishing points in photographs can be distracting because the perspective of the image is correct only when viewed from the center of projection. Escher drew "Reptiles" with an overhead viewpoint looking down to the ground plane. Objects that are perpendicular to the ground, such as the shot glass and the bottle, are completely vertical in the drawing. A standard perspective transformation would normally distort them so that the bottle and the shot glass appear to lean toward the right. In order to fix this, we implemented a tilt-shift lens, a feature common to view cameras and high-end 35mm systems.

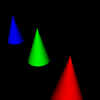

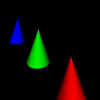

The perceived distortion of vanishing points is evident in the following image:

The tilt-shift lens solves this problem by effectively rotating the film plane to be perpendicular to the ground plane. The vanishing points then disappear, as does the perceived distortion. We simulated this effect by allowing graphic artists to add a "pitch_angle" parameter to perspective transformations that rotates the view of the camera without changing the orientation of the film plane.

This portion of the project was implemented by changing the parameters passed to the Frustum transformation. The center of the image is rotated about the eye point by pitch_angle degrees. The extent of the frustum in the y direction is then expanded to maintain the original field of view. The distortion of the previous image is eliminated in the corrected version:

The cones now all point upward, as would be expected by someone looking directly at one of the cones.
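The frustum adjustment described above can be illustrated with a minimal sketch. It is not the LRT code: the function name `pitched_frustum` and its parameters are hypothetical, and it assumes that the film plane stays perpendicular to the z axis at distance `near` while the edge rays of the original field of view are pitched about the eye point. Keeping the horizontal extent unchanged is also an assumption.

```python
import math

def pitched_frustum(fov_deg, pitch_deg, aspect, near):
    """Return (left, right, bottom, top) on the near plane.

    Hypothetical helper: the view direction is pitched by pitch_deg
    about the eye, but the film plane keeps its orientation, so the
    frustum becomes asymmetric in y.
    """
    half = math.radians(fov_deg) / 2.0
    pitch = math.radians(pitch_deg)
    if abs(pitch) + half >= math.pi / 2.0:
        raise ValueError("pitch plus half the field of view must stay below 90 degrees")
    # Edge rays of the original vertical field of view, after pitching,
    # intersected with the unrotated film plane.
    bottom = near * math.tan(pitch - half)
    top = near * math.tan(pitch + half)
    # Assumption: the horizontal extent is left as in the unpitched view.
    half_width = near * math.tan(half) * aspect
    return (-half_width, half_width, bottom, top)
```

With a pitch of zero the result is the usual symmetric frustum. With a nonzero pitch the y extent grows, because the same angular field of view covers more of the unrotated film plane.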

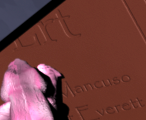

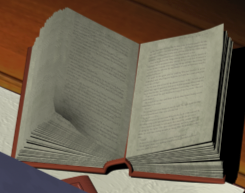

Bump Mapping & Multi-Texturing Enhancements

In order to make the image as realistic as possible we implemented bump mapping. A bitmap stores the bump heights. These bumps can be amplified or even inverted using a bumptextureamount variable. The bump mapping code was placed in the shade context so that it is accessible from all shaders and so that bumps remain visible in recursive reflections. Multi-texturing is also in place to allow for more realistic objects. Nearly every object in the scene was textured in one way or another. Without texturing, many objects had a plastic feel that detracted from the scene.
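As an illustration of height-map bump mapping with a scale factor, here is a minimal sketch. It uses a common tangent-space approximation rather than the code in our shade context; the function names, the orthonormal `tangent` and `bitangent` inputs, and the central-difference gradient are all assumptions. The `amount` argument plays the role of bumptextureamount.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def height_gradient(heights, x, y):
    """Central differences on a 2D height grid, clamped at the borders."""
    rows, cols = len(heights), len(heights[0])
    x0, x1 = max(x - 1, 0), min(x + 1, cols - 1)
    y0, y1 = max(y - 1, 0), min(y + 1, rows - 1)
    dhdu = (heights[y][x1] - heights[y][x0]) / max(x1 - x0, 1)
    dhdv = (heights[y1][x] - heights[y0][x]) / max(y1 - y0, 1)
    return dhdu, dhdv

def bumped_normal(normal, tangent, bitangent, heights, x, y, amount):
    """Tilt the normal against the height gradient, scaled by amount.

    amount > 1 amplifies the bumps, amount < 0 inverts them,
    and amount == 0 leaves the normal unchanged.
    """
    dhdu, dhdv = height_gradient(heights, x, y)
    perturbed = tuple(
        n - amount * (dhdu * t + dhdv * b)
        for n, t, b in zip(normal, tangent, bitangent)
    )
    return normalize(perturbed)
```

Because only the shading normal changes, the same perturbed normal can be used for direct lighting and for spawning reflection rays.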

Embossed names

Paper grain simulation

Bump mapping and multiple textures are used in this book to add realism.

"Iridescent" Surfaces

Since we were unable to perfectly model the reptiles, we decided to enhance their appearance with an Iridescent shader. Without implementing an actual simulation of iridescent effects, we developed a multicolored, anisotropic, view-dependent surface shader. It gives the impression of having a wavelength-dependent surface. The surface was implemented by combining the information from the [s, t] coordinates for vertices with the cosine of the half-angle to form a series of swirling spots on the surface. These spots have a center of color as well as a center of visibility. The spots are generated randomly using a repeatable random number algorithm. Areas not covered by these swirling spots are filled in by a separate diffuse color. The following image demonstrates the final surface:

Parallel Rendering

We also modified lrt to run on multiple machines simultaneously through the standard MPI interface. One node is designated the master node and collects the sample data from the other nodes. The other nodes are each assigned a block of samples to evaluate. When a node finishes its block, it sends the results via MPI to the master node, which adds them to the image and writes the image once all the samples are collected. Rendering on clusters was noticeably faster than on a single machine, but a quantitative evaluation of the speedup remains to be done.
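The master/worker split described above can be sketched without any MPI calls. This is an illustration only: the function names are hypothetical, the per-worker result lists stand in for the messages a real implementation would send over MPI, and averaging the samples that land on each pixel is an assumed reconstruction filter.

```python
def split_into_blocks(num_samples, num_workers):
    """Return contiguous (start, stop) sample ranges, one per worker."""
    base, extra = divmod(num_samples, num_workers)
    blocks, start = [], 0
    for worker in range(num_workers):
        stop = start + base + (1 if worker < extra else 0)
        blocks.append((start, stop))
        start = stop
    return blocks

def render_block(block, shade):
    """Worker side: evaluate every sample in the assigned block."""
    start, stop = block
    return [(index, shade(index)) for index in range(start, stop)]

def master_collect(results_per_worker, num_pixels, pixel_of):
    """Master side: add each received sample to its pixel, then average."""
    totals = [0.0] * num_pixels
    counts = [0] * num_pixels
    for results in results_per_worker:  # one message per worker
        for index, value in results:
            pixel = pixel_of(index)
            totals[pixel] += value
            counts[pixel] += 1
    return [t / n if n else 0.0 for t, n in zip(totals, counts)]
```

Since blocks are fixed up front, a slow node delays the final image; handing out smaller blocks on demand would balance the load better at the cost of more messages.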

Light Field Viewer

We modified lrt to produce "light fields," which are collections of samples rather than images. These collections can be used to simulate several interesting effects. Our light field renderer/viewer has the following features:

- Parallax Scrolling

- Depth of Field

- 4D Interpolation

- 4D Supersampling

- Progressive Rendering

Interface

The interface has several controls:

- Enable Interpolation: When on, the system does 4D interpolation for each point on the image. When off, the system uses the nearest neighbor.

- Enable Depth of Field, Depth of Field, Focal Distance: These options change the depth of field parameters for the system. "Depth of Field" is equivalent to 1 / F-Stop.

- Translate: These options move the eye around the scene. Note that depth of field will not work as well near the edges of the light field.
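The difference between the two settings of Enable Interpolation can be sketched as follows. This is not the viewer's code: it assumes the light field is stored as a nested list `field[u][v][s][t]` of scalar radiance values on a regular grid, indexed by fractional coordinates, and the function names are hypothetical. Color would be handled by running the same lookup per channel.

```python
import math

def sample_nearest(field, u, v, s, t):
    """Nearest-neighbor lookup: round each coordinate to a grid index."""
    node = field
    for c in (u, v, s, t):
        i = min(max(int(math.floor(c + 0.5)), 0), len(node) - 1)
        node = node[i]
    return node

def sample_4d(field, u, v, s, t):
    """Quadrilinear interpolation over the 16 surrounding grid samples."""
    sizes = (len(field), len(field[0]), len(field[0][0]), len(field[0][0][0]))
    lo, frac = [], []
    for c, n in zip((u, v, s, t), sizes):
        c = min(max(c, 0.0), n - 1.0)          # clamp to the grid
        i = min(int(math.floor(c)), max(n - 2, 0))
        lo.append(i)
        frac.append(c - i)
    total = 0.0
    for corner in range(16):                   # 2^4 corners of the 4D cell
        weight = 1.0
        idx = []
        for axis in range(4):
            bit = (corner >> axis) & 1
            weight *= frac[axis] if bit else 1.0 - frac[axis]
            idx.append(min(lo[axis] + bit, sizes[axis] - 1))
        total += weight * field[idx[0]][idx[1]][idx[2]][idx[3]]
    return total
```

Interpolation reads sixteen samples per lookup instead of one, which is the cost paid for smoother images between the stored views.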

Examples

These images are rendered from a light field consisting of a 16x16 array of 100x100 pixel images. Note the supersampling in the original images that allows the light field viewer to generate high-quality images from these small initial images. Gray pixels indicate points where sampling was unsuccessful.

Depth of Field

Near Object in Focus

Far Object in Focus

Parallax Scrolling

Left and Down

Right and Up

Interpolation

No Interpolation

Interpolation

Downloads

LF View:

LRT & Reptiles Scene

Source and Scene (7 megs)
